Steps to create a Performance Testing Scenario (for PO) for the PTF Team

Submitting Performance Test Scenarios to the PTF team

What types of performance tests to assign to the PTF team

- Please see Types of tests

Prior to recording and creating the test scenario:

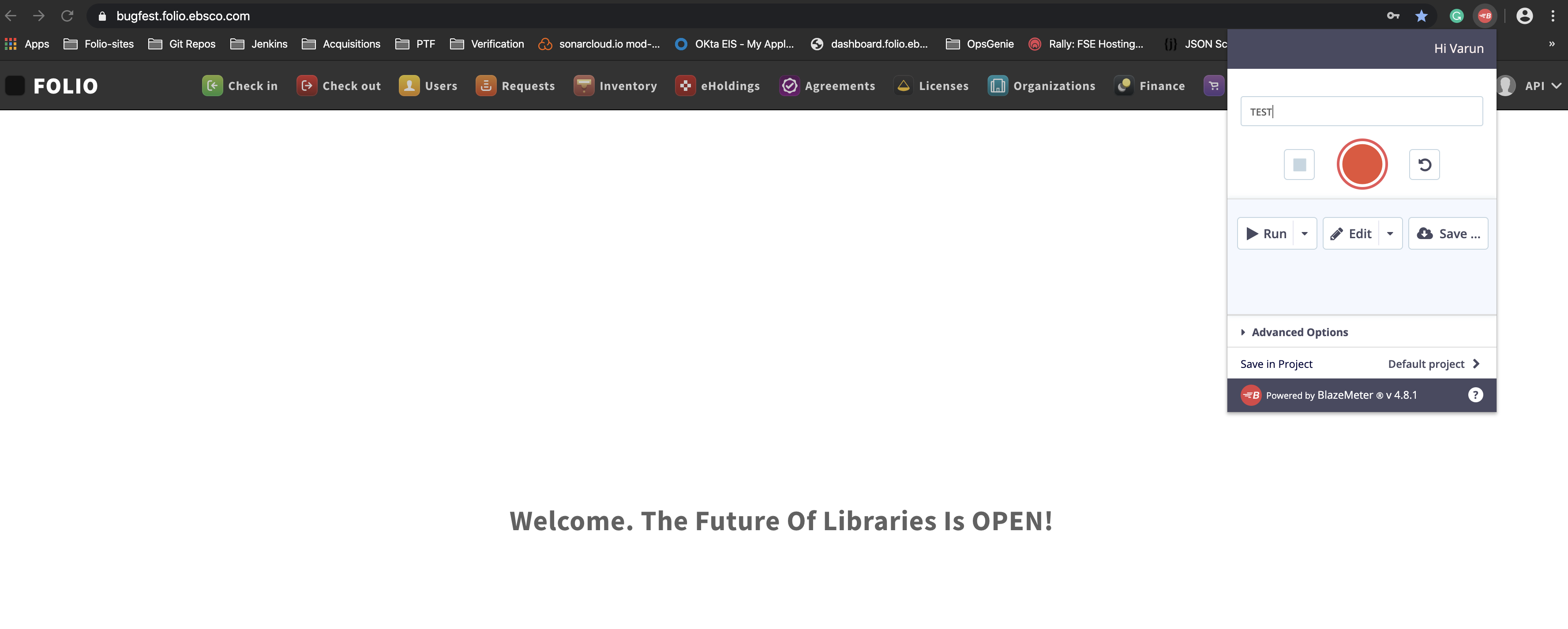

1. Install the open-source Blazemeter Chrome plugin

- The plugin can be downloaded from https://chrome.google.com/webstore/detail/blazemeter-the-continuous/mbopgmdnpcbohhpnfglgohlbhfongabi/related

- You will have to sign up to start using it. Sign-up is free.

2. Install or use a screen recording tool to record every step of the scenario

- Start the screen recording before you start recording with the Chrome plugin. Use a screen recording tool you are comfortable with, or one of those suggested below.

- Windows tool for screen recording: https://getsharex.com/

- macOS tool: the built-in QuickTime Player

- Web-based: https://screencast-o-matic.com/

3. Set up data for the scenario. NOTE: This may take up most of the time spent preparing the scenario, and it may require a discussion with your development team or PTF.

4. Go through the scenario on the selected environment to make sure that no issues with the data set, or anything else, will come up during the recording.

Record test scenario

5. Start recording the screen with the selected screen recording tool

6. Start recording the scenario with the Chrome plugin (Blazemeter)

- Type a short name for your scenario, for example: searching for a certain book in Spanish (the name should give a high-level understanding of the scenario)

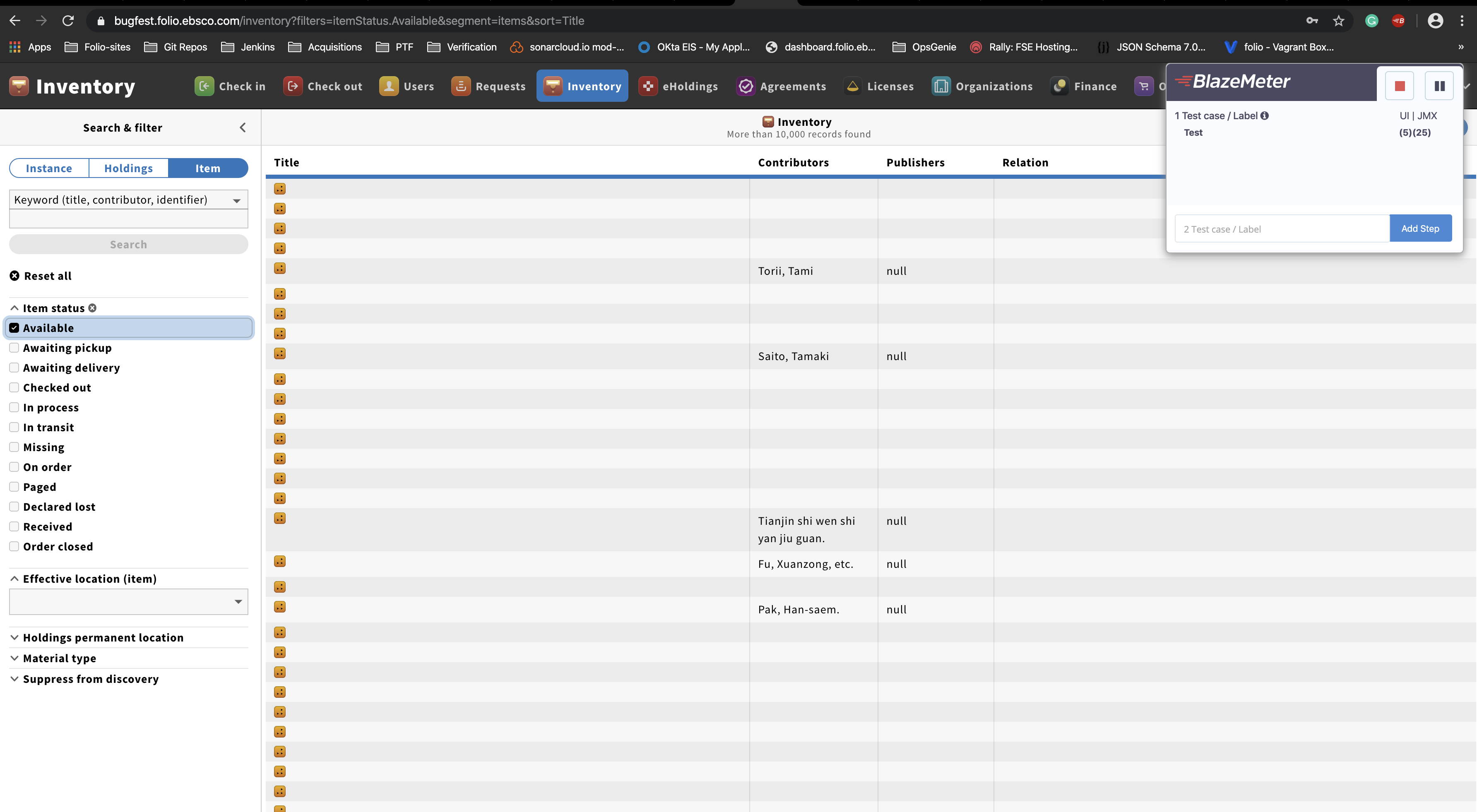

- Start recording: execute the workflow

7. Stop recording both the screen (screen recording tool) and the test scenario (Blazemeter).

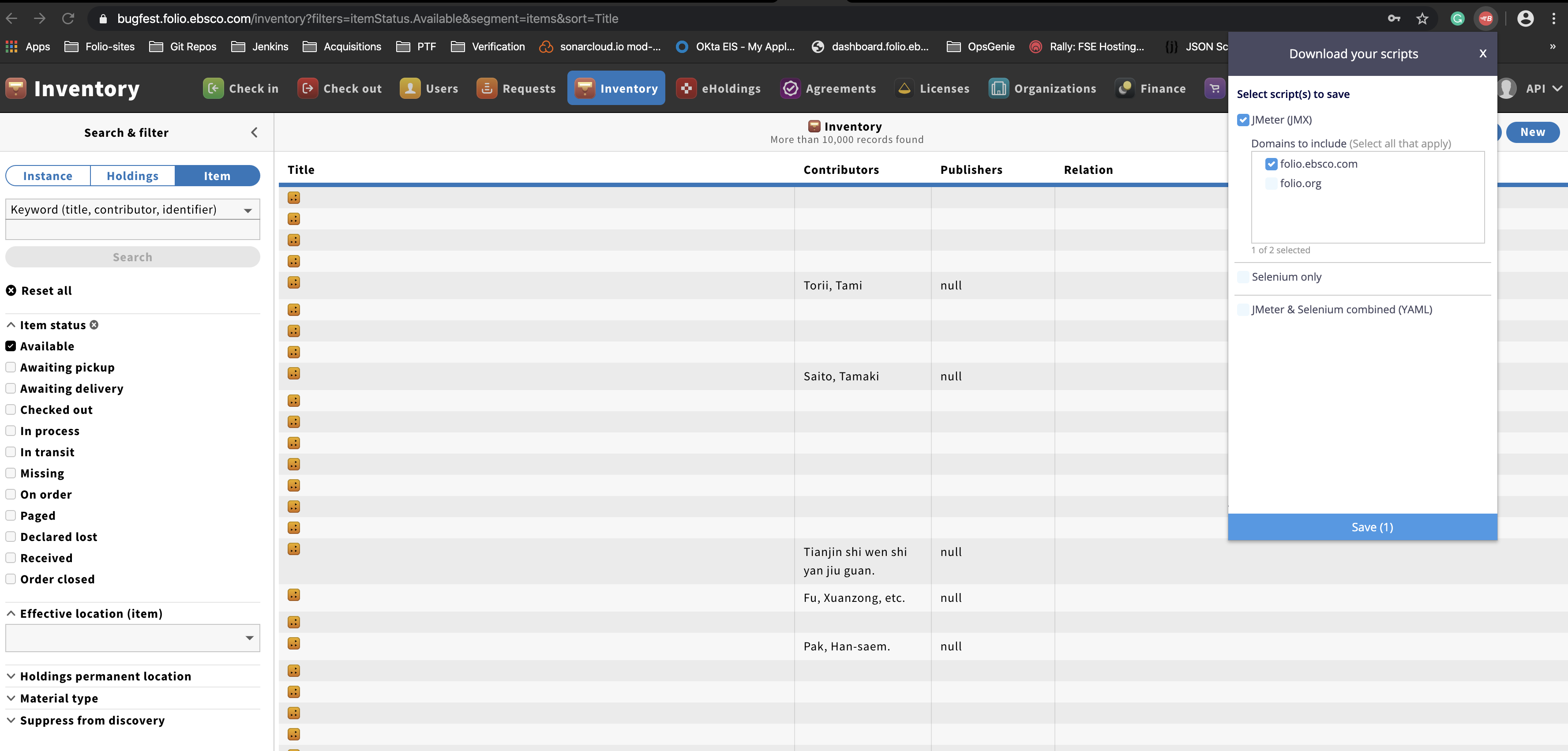

8. "Save" the recorded scenario to a JMX file.
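A saved JMX file is a JMeter test plan stored as XML, so it can be sanity-checked before it is attached to the Jira issue. The following is a minimal sketch, not part of the PTF process. It assumes each recorded request appears as an `HTTPSamplerProxy` element with `HTTPSampler.*` string properties, which is how JMeter test plans usually store HTTP requests. The function name `list_recorded_requests` is hypothetical.

```python
import xml.etree.ElementTree as ET


def list_recorded_requests(jmx_path):
    """Return (name, method, domain, path) for each HTTP sampler in a JMX file.

    Assumption: requests are stored as <HTTPSamplerProxy> elements whose
    direct <stringProp> children carry the HTTPSampler.* settings.
    """
    root = ET.parse(jmx_path).getroot()
    requests = []
    for sampler in root.iter("HTTPSamplerProxy"):
        # Collect the sampler's string properties into a name -> value map.
        props = {
            prop.get("name"): (prop.text or "")
            for prop in sampler.findall("stringProp")
        }
        requests.append((
            sampler.get("testname", ""),
            props.get("HTTPSampler.method", ""),
            props.get("HTTPSampler.domain", ""),
            props.get("HTTPSampler.path", ""),
        ))
    return requests
```

Calling `list_recorded_requests` on the saved file and comparing the result with the steps you performed is a quick way to confirm that the recording captured the whole workflow. An empty list suggests the recording did not capture any requests.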

JIRA Flow

- Create a user story

- Assign it to the applicable development team. Based on the test scenario, the team decides whether to run the test themselves or whether it requires PTF. If it requires PTF, apply the label "PTF-Review".

- PTF clones the story and moves the clone to the PERF project, linking it to the original ticket. PTF investigates and tests.

- If there is an issue, PTF creates a task for the original dev team to execute, linking it to the PERF and original tickets.

- Once the test completes, PTF provides a report to the development team. See reports.

JIRA Tickets

- The name of the Jira issue should be similar to the name of the test scenario (an exact match is not needed): a high-level description of the scenario. The description should contain:

- App name/area of issue (required)

- Brief statement of issue (required)

- How many users at any given time (required)

- Institution size to test with (needs details from PTF, as this applies only to complex workflows)

- Volume of data (required)

- Whether to test in a single environment, multi-tenant, or both (required)

- Whether check-out/check-in transactions should be considered in testing (required)

- Whether data import should be considered in testing. If testing multi-tenant, whether multiple data import jobs should be running concurrently. (required)

- Expected response time (if known).

- Main modules that are involved in the process (if obvious or if known)

- Any specific settings, items, or scenarios (e.g., MARC bib records were being exported during a check-in, or an item being checked out has 10 requests attached to it)

- A list of the backend software modules (to obtain this list, go to Settings → Software versions, copy and paste the middle column "Okapi Services" into a file, and attach the file to the Jira issue. Alternatively, while doing step 5, go to this page and scroll down slowly so that the versions are captured on the video)

- Add the priority for the task

- Add a note on when you expect the scenario to be tested, at the month level

- Attach the scripted scenario (the JMX file) from the Chrome plugin to the Jira issue

- Attach the recorded screen video to the Jira issue.
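The description checklist above can be turned into a small template helper, so that a required item is not forgotten before the issue is submitted. This is only an illustrative sketch: the field labels paraphrase the list above, and the names `REQUIRED_FIELDS`, `OPTIONAL_FIELDS`, and `build_description` are hypothetical, not part of any Jira or PTF tooling.

```python
# Field labels paraphrase the checklist; adjust them to your team's wording.
REQUIRED_FIELDS = [
    "App name/area of issue",
    "Brief statement of issue",
    "Users at any given time",
    "Volume of data",
    "Single environment, multi-tenant, or both",
    "Consider check-out/check-in transactions",
    "Consider data import",
]
OPTIONAL_FIELDS = [
    "Institution size to test with",
    "Expected response time",
    "Main modules involved",
    "Specific settings, items, or scenarios",
]


def build_description(values):
    """Build the issue description text from a dict of field -> string value.

    Raises ValueError if any required field is missing or blank.
    """
    missing = [
        field for field in REQUIRED_FIELDS
        if not str(values.get(field, "")).strip()
    ]
    if missing:
        raise ValueError("Missing required fields: " + ", ".join(missing))

    lines = []
    for field in REQUIRED_FIELDS + OPTIONAL_FIELDS:
        value = str(values.get(field, "")).strip()
        if value:  # optional fields are skipped when left empty
            lines.append(f"{field}: {value}")
    return "\n".join(lines)
```

The returned text can be pasted into the issue description. The attachments (scripted scenario, screen video, and module list) still have to be added by hand.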